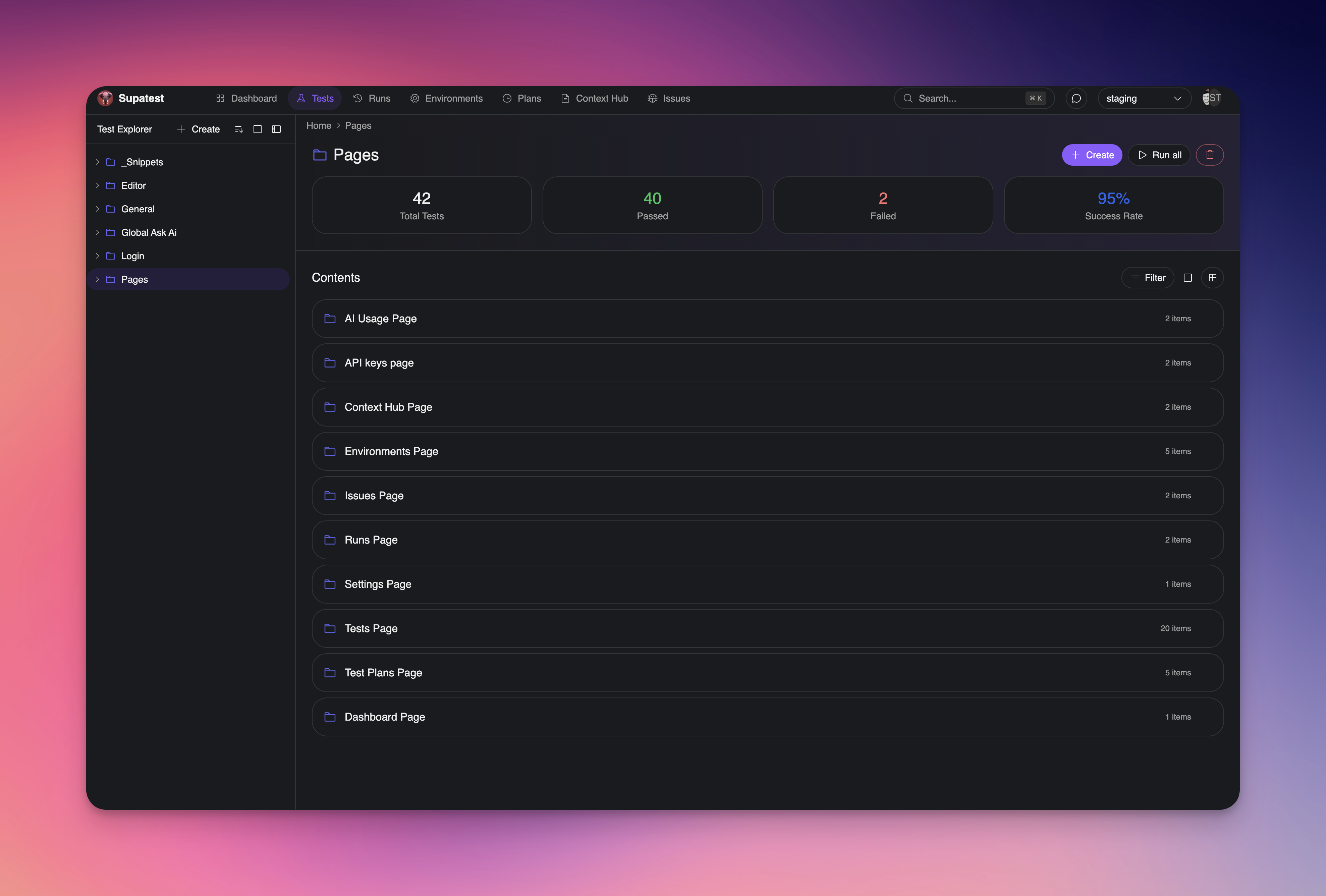

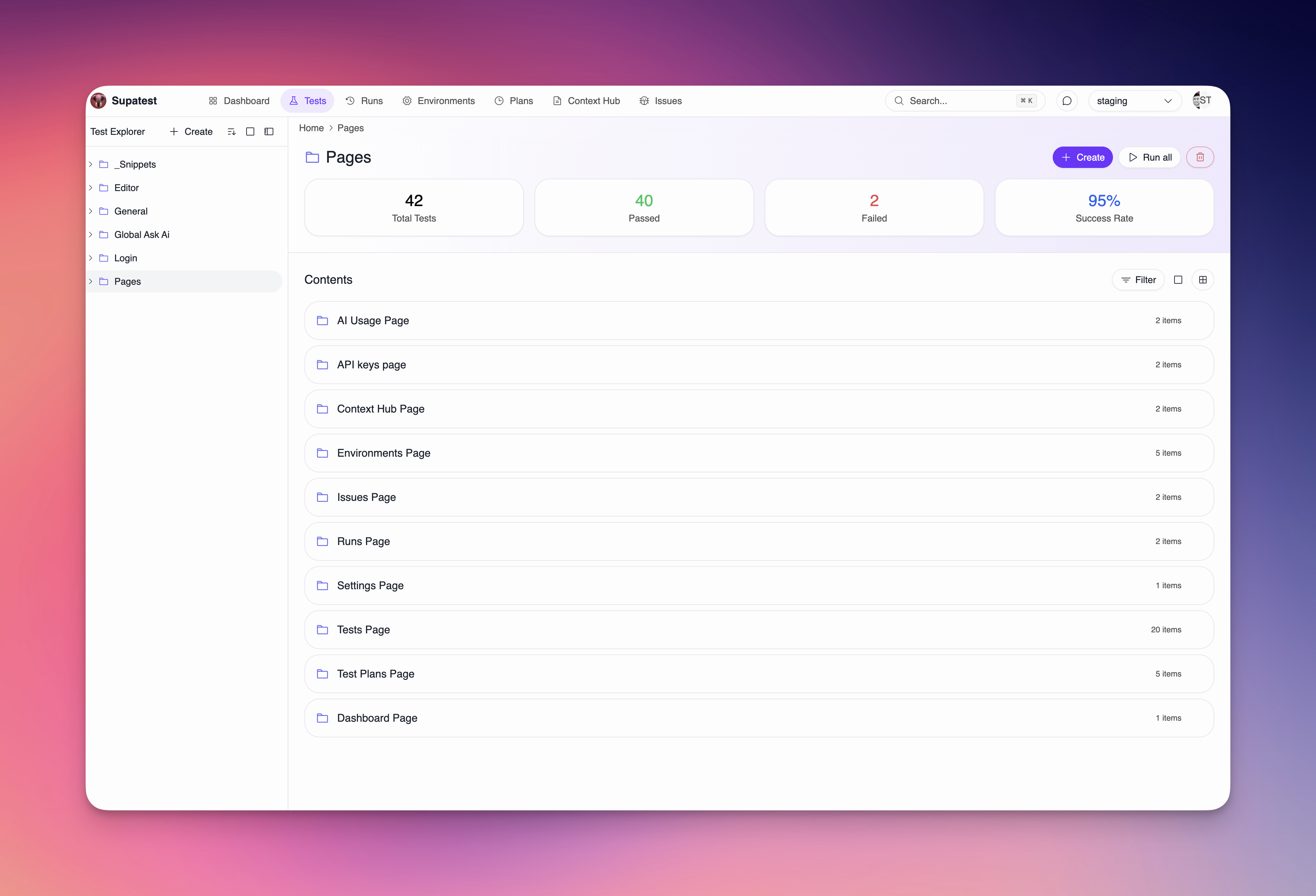

Overview

Use the Test Explorer to create, organize, and manage test cases and snippets. Structure tests in folders, move items with drag and drop, and access common actions from context menus.

Folders and structure

- Home (root): Top-level container for all folders and test cases

- Folders: Nest folders to mirror product areas or teams

- Test cases: Individual automated tests

- Snippets: Reusable step groups that can be inserted into tests

Tip: Keep folder names simple and stable. Use folders to express ownership (team or feature), and use plans to schedule whole folders.
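As a mental model, the hierarchy above can be pictured as a simple tree with Home at the root. The sketch below is illustrative only: the `Node` class, its fields, and the example names are assumptions made for this example, not the product's actual data model or API.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(eq=False)  # compare nodes by identity; avoids recursing through parent/children
class Node:
    """Hypothetical tree node: a folder, test case, or snippet."""
    name: str
    kind: str  # "folder", "test", or "snippet"
    parent: Optional["Node"] = field(default=None, repr=False)
    children: List["Node"] = field(default_factory=list, repr=False)

    def add(self, child: "Node") -> "Node":
        # Only folders (including Home) can contain other items.
        if self.kind != "folder":
            raise ValueError("only folders can contain items")
        child.parent = self
        self.children.append(child)
        return child

    def path(self) -> str:
        # Walk up to the root and join the names from the top down.
        parts = []
        node: Optional[Node] = self
        while node is not None:
            parts.append(node.name)
            node = node.parent
        return "/".join(reversed(parts))


home = Node("Home", "folder")
checkout = home.add(Node("Checkout", "folder"))
login_snippet = checkout.add(Node("Login", "snippet"))
pay_test = checkout.add(Node("Pay with card", "test"))

print(pay_test.path())  # Home/Checkout/Pay with card
```

Thinking of folders this way makes it easier to see why stable folder names matter: every item's location is derived from the names above it.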

Creating items

The header provides quick actions:

- New Test Case: Create a test in the selected folder or Home

- New Snippet: Create a reusable snippet in the selected folder or Home

- Import Test Case: Import from file

- Create Folder: Add a folder at the current level

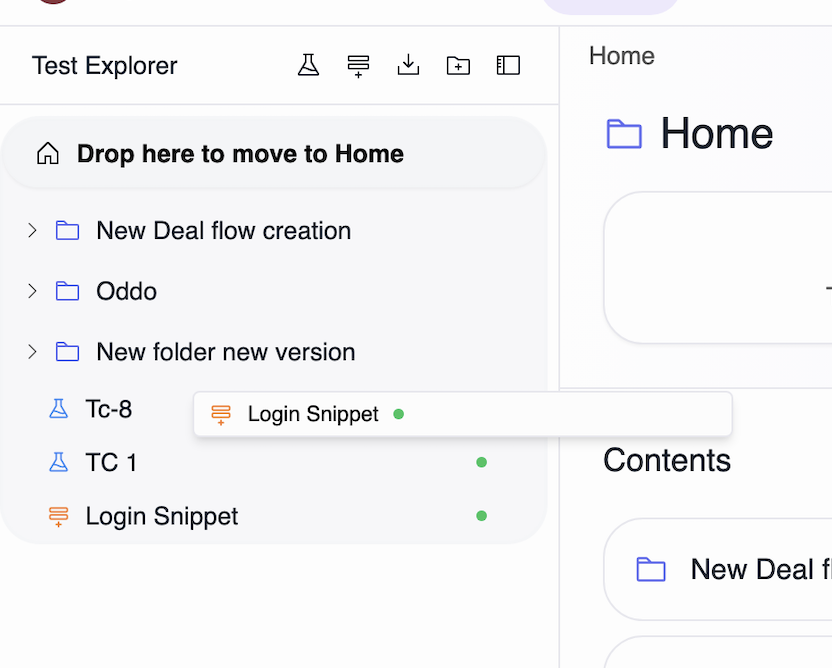

Organizing with drag and drop

- Move test cases: Drag a test case to another folder

- Move folders: Drag a folder onto another folder to re-parent it

- Move to Home: Drop on the Home area to move items to root

- Visual feedback: The drop target highlights and a compact preview follows the cursor
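The drop rules above can be sketched as a small validation function. This is a minimal illustration under stated assumptions: the `check_move` function, the `parents` map, and the messages are hypothetical, and the rule that a folder cannot be dropped into its own subfolder is an assumption about typical tree behavior, not something this page confirms.

```python
from typing import Dict, Optional, Set

HOME = "Home"


def check_move(
    parents: Dict[str, Optional[str]],
    folders: Set[str],
    item: str,
    target: str,
) -> Optional[str]:
    """Return None if the move is allowed, otherwise a reason string."""
    if target not in folders:
        return "Cannot drop here: target is not a folder or Home"
    if item == HOME:
        return "Home cannot be moved"
    if parents[item] == target:
        return "Item is already in this folder"
    # Assumed rule: walk up from the target; reaching the item means a cycle.
    node: Optional[str] = target
    while node is not None:
        if node == item:
            return "Cannot move a folder into itself or its own subfolder"
        node = parents[node]
    return None


# Hypothetical tree: Home > Checkout > Cards > "Pay with card"
parents = {
    "Home": None,
    "Checkout": "Home",
    "Cards": "Checkout",
    "Pay with card": "Cards",
}
folders = {"Home", "Checkout", "Cards"}

print(check_move(parents, folders, "Pay with card", "Checkout"))  # None
print(check_move(parents, folders, "Pay with card", "Cards"))     # Item is already in this folder
```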

Context menus and actions

Right-click (or use the row menu) on folders and test cases:

Folder actions

- New Test Case: Create under this folder

- New Snippet: Create under this folder

- Import Test Case: Import into this folder

- New Folder: Create a subfolder

- Rename: Update the folder’s name

- Delete: Remove the folder

Test case actions

- Edit: Open the test for editing

- Duplicate: Create a copy in the same folder

- Save as Snippet: Convert the test steps into a snippet

- Delete: Remove the test case

Snippets

- Reuse login flows, setup routines, or any repeatable sequence

- Insert snippets into tests with the Add Snippet step

- Update one snippet to propagate changes across every test that references it

- Enable Store Browser State in snippet settings to skip repeated logins. Learn more in Browser State Storage.
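Snippet propagation works because tests reference a snippet rather than copying its steps. The following is a conceptual sketch only: the dictionaries, the `expand` function, and the step strings are hypothetical and do not reflect how the product stores tests internally.

```python
from typing import Dict, List, Union

# A step is either a plain action or a reference to a snippet by name.
Step = Union[str, Dict[str, str]]

snippets: Dict[str, List[str]] = {
    "login": ["Navigate /login", "Fill email", "Fill password", "Click Sign in"],
}

tests: Dict[str, List[Step]] = {
    "Checkout": [{"snippet": "login"}, "Click Cart"],
    "Profile": [{"snippet": "login"}, "Click Profile"],
}


def expand(steps: List[Step], library: Dict[str, List[str]]) -> List[str]:
    """Resolve snippet references into concrete steps at run time."""
    resolved: List[str] = []
    for step in steps:
        if isinstance(step, dict):
            resolved.extend(library[step["snippet"]])
        else:
            resolved.append(step)
    return resolved


before = expand(tests["Checkout"], snippets)

# Editing the snippet once changes every test that references it.
snippets["login"].append("Verify dashboard")

after_checkout = expand(tests["Checkout"], snippets)
after_profile = expand(tests["Profile"], snippets)
print(len(before), len(after_checkout), len(after_profile))  # 5 6 6
```

By contrast, Duplicate creates an independent copy, which is why copies can drift while snippet references cannot.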

Test case details

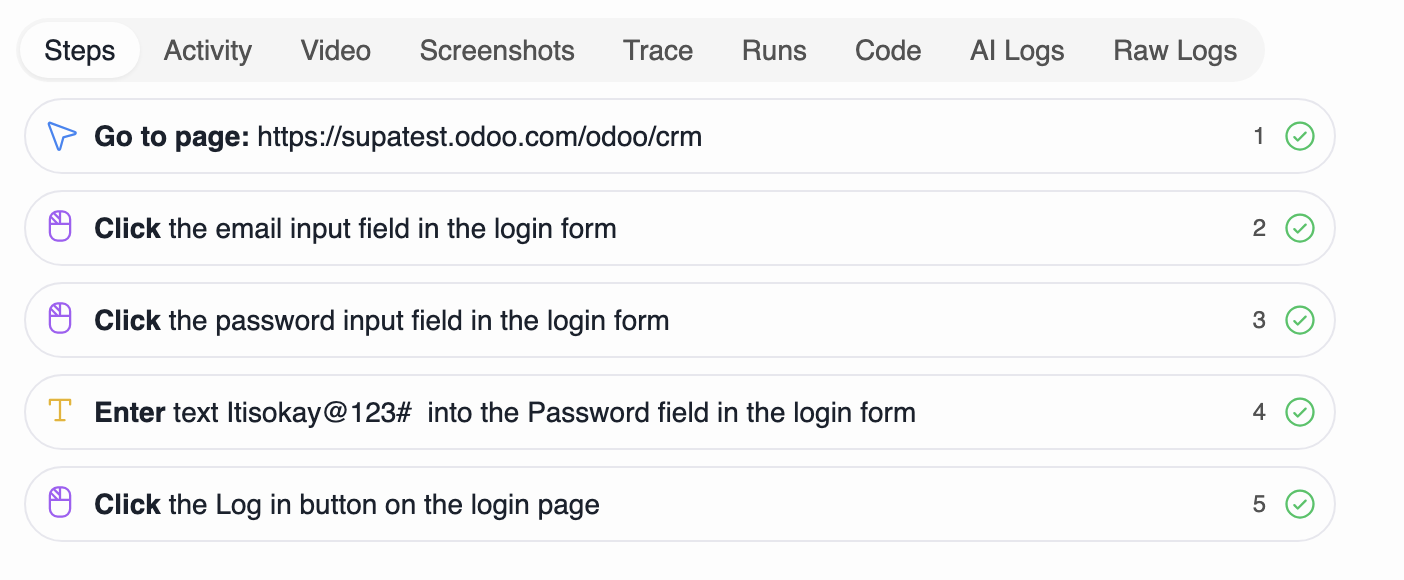

Open any test case to access dedicated tabs for building and analysis:

Steps

- Build and edit the test with the visual editor

- Add steps like Navigate, Fill, Click, Verify, Select, Run Python

- Use Expressions `{{ ... }}` for Env/Vars/Random values

- Run from start or from a specific step

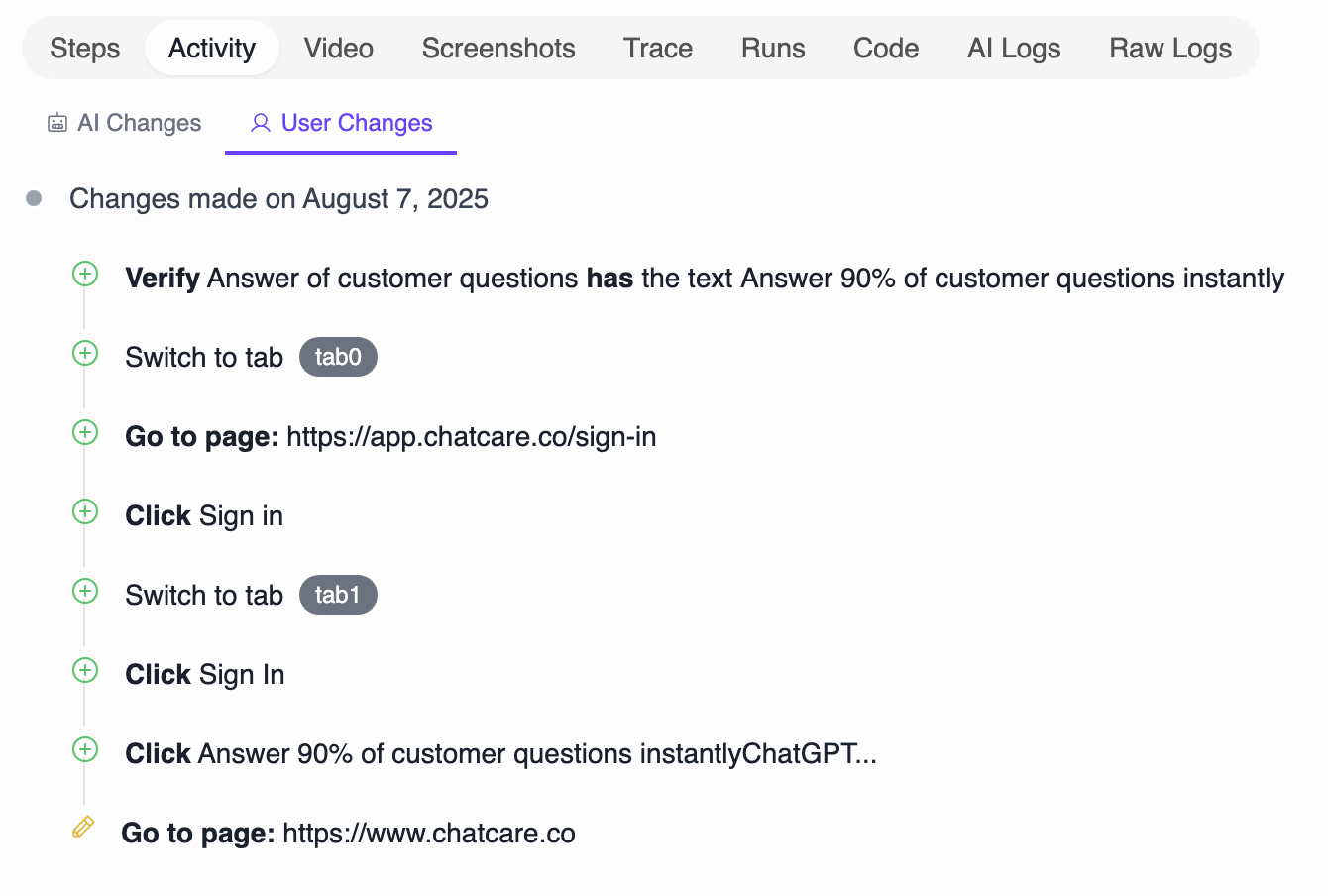
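To illustrate how `{{ ... }}` Expressions in step fields might be resolved, here is a minimal sketch of placeholder substitution. Everything in it is an assumption for illustration: the `env.` and `vars.` prefixes, the `resolve` function, and the sample values are hypothetical, random values are left out, and the product's real expression syntax may differ.

```python
import re
from typing import Any, Dict

# Matches {{ namespace.key }} with optional whitespace inside the braces.
PLACEHOLDER = re.compile(r"\{\{\s*([\w.]+)\s*\}\}")


def resolve(template: str, context: Dict[str, Dict[str, Any]]) -> str:
    """Replace each placeholder with the matching value from the context."""

    def lookup(match: "re.Match[str]") -> str:
        namespace, _, key = match.group(1).partition(".")
        # Raises KeyError for unknown names, so typos fail loudly.
        return str(context[namespace][key])

    return PLACEHOLDER.sub(lookup, template)


# Hypothetical environment values and test variables.
context = {
    "env": {"BASE_URL": "https://example.test"},
    "vars": {"user_id": 42},
}

url = resolve("{{ env.BASE_URL }}/users/{{ vars.user_id }}", context)
print(url)  # https://example.test/users/42
```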

Activity

- Recent actions for this test (edits, runs, healing)

Last Run Report

The Last Run Report tab consolidates all execution assets from the most recent test run. This tab contains sub-tabs for:

- Video: Full video replay of the test execution

- Screenshots: Step‑aligned screenshots for quick visual verification

- Trace: Timeline and action traces for deeper debugging

- Logs: Test execution logs with both AI-generated summaries and raw technical output

- AI Execution: Detailed logs from AI-driven steps, including agent decisions, AI assertions, and auto-healing actions

- Inbox: Emails and TOTP codes captured during the test run

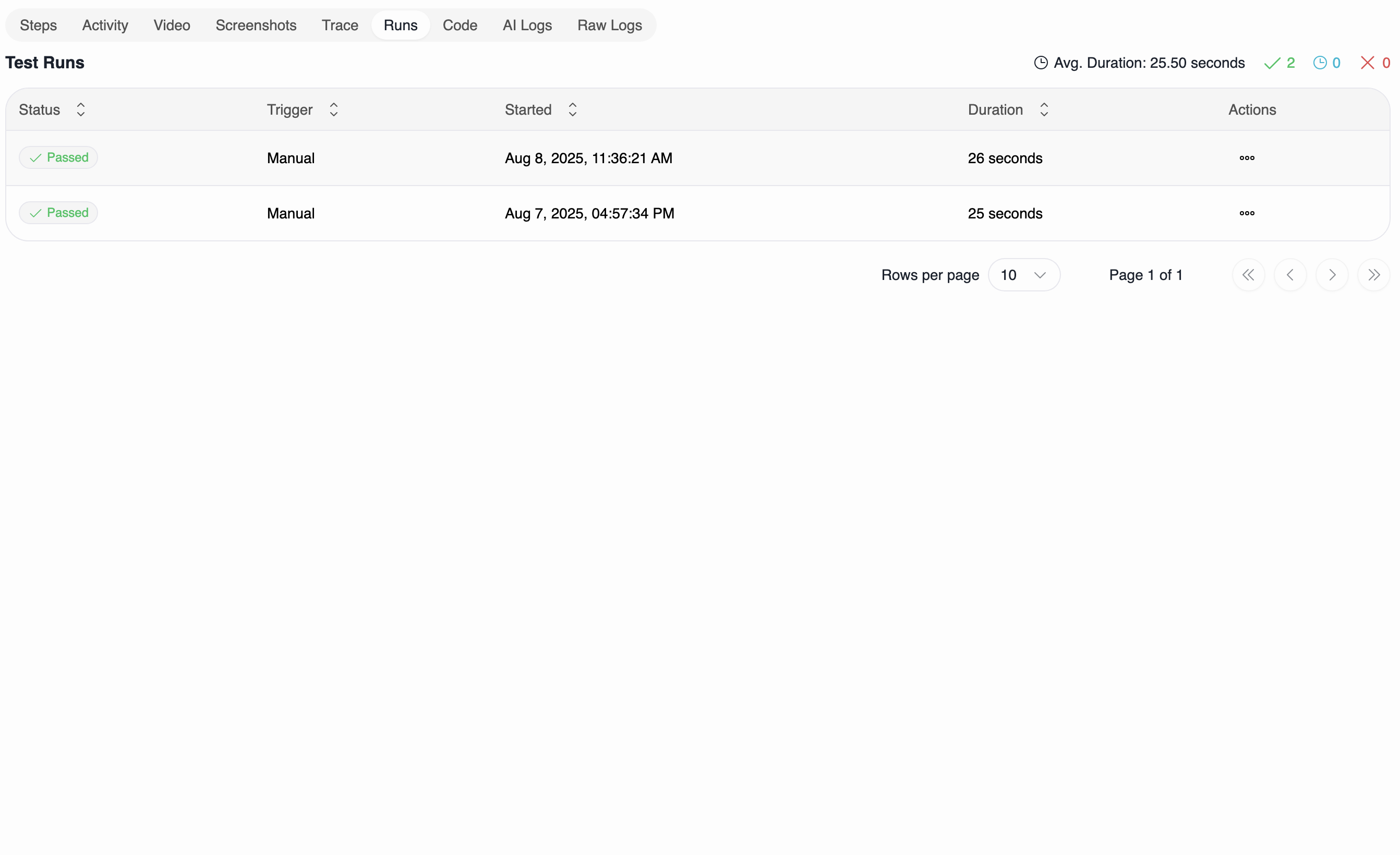

Runs

The Runs tab provides comprehensive test execution history and analytics. It includes two sub-tabs:

History

- Complete execution history with status, timing, and trigger information

- Click any run to view detailed information and all execution assets

- Filter and search through past executions

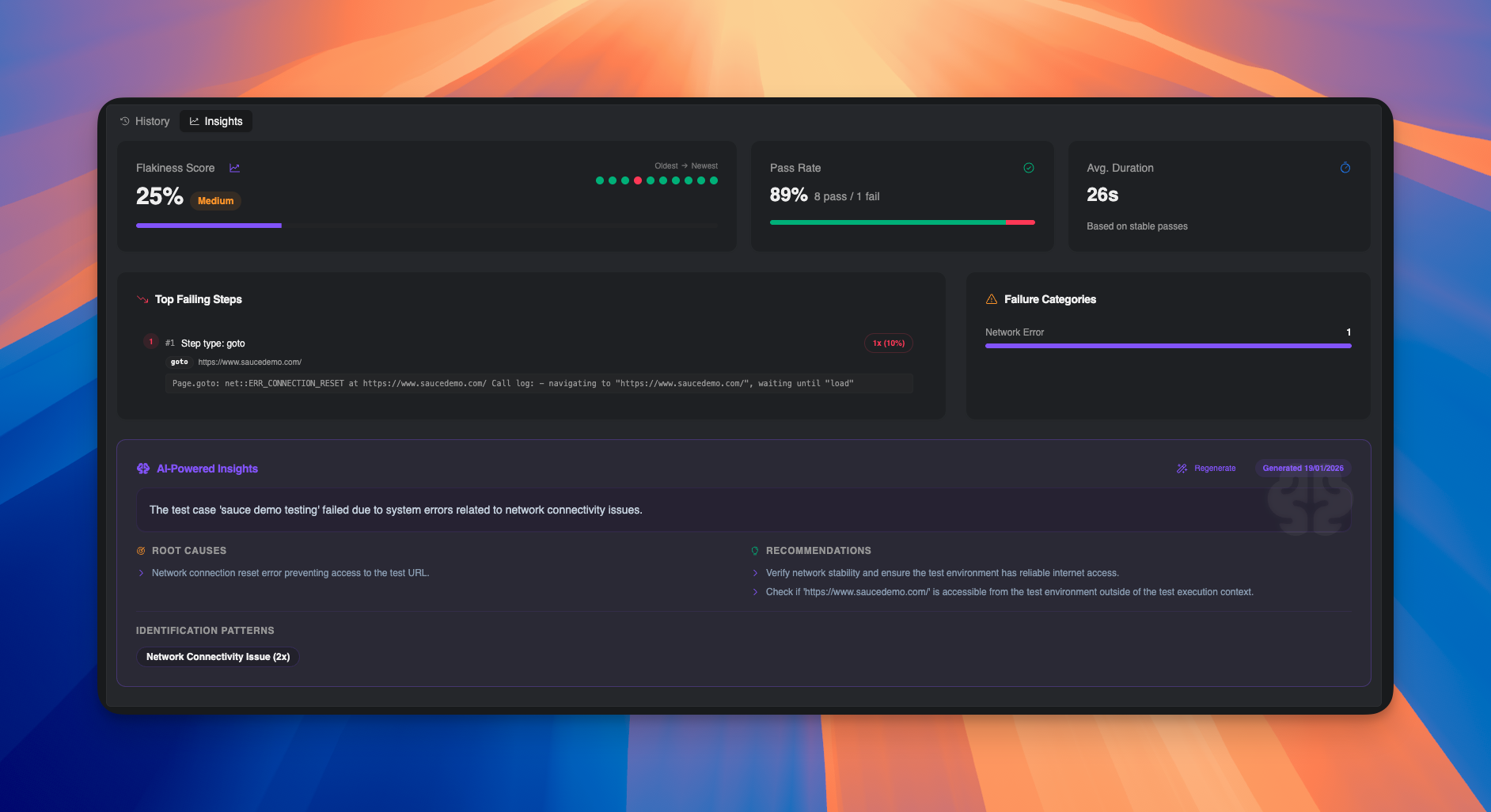

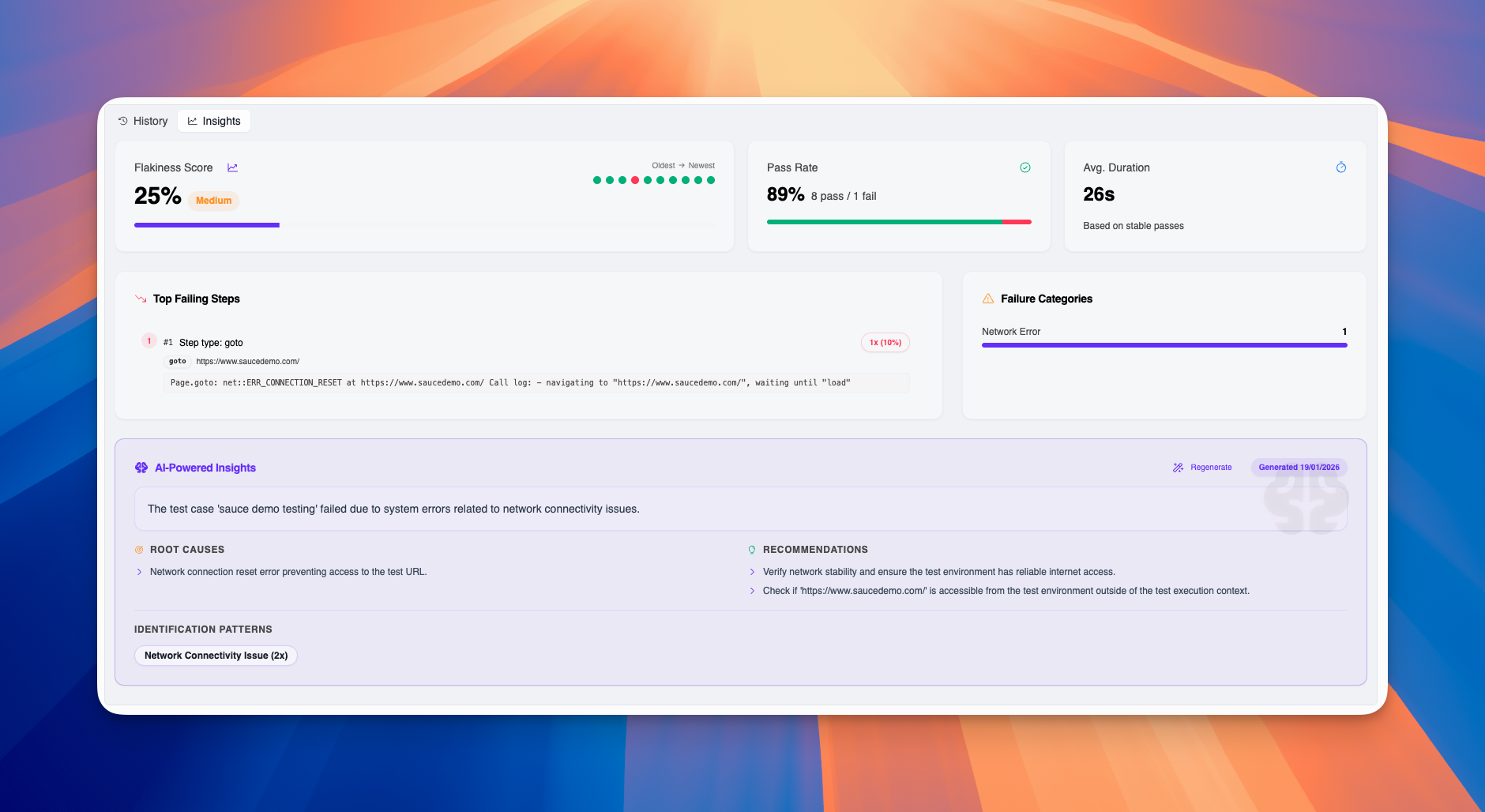

Insights

The Insights tab provides AI-powered analytics and actionable intelligence about your test’s reliability and failure patterns:

- Flakiness Score: Percentage indicating how unreliable the test is (0% = stable, 100% = highly flaky), with a visual severity indicator

- Pass Rate: Success percentage across recent runs with pass/fail breakdown

- Average Duration: Typical execution time based on stable (passing) runs

- Run Timeline: Visual dot chart showing pass/fail patterns from oldest to newest runs

- Top Failing Steps: Most frequently failing steps with error messages and occurrence counts

- Failure Categories: Breakdown of failure types (Network Error, Timeout, Assertion, etc.) with distribution bars

- Root Causes: AI-identified underlying reasons for test failures

- Recommendations: Actionable suggestions to improve test stability

- Identification Patterns: Common failure patterns detected across runs

- Regenerate: Refresh AI analysis with latest run data

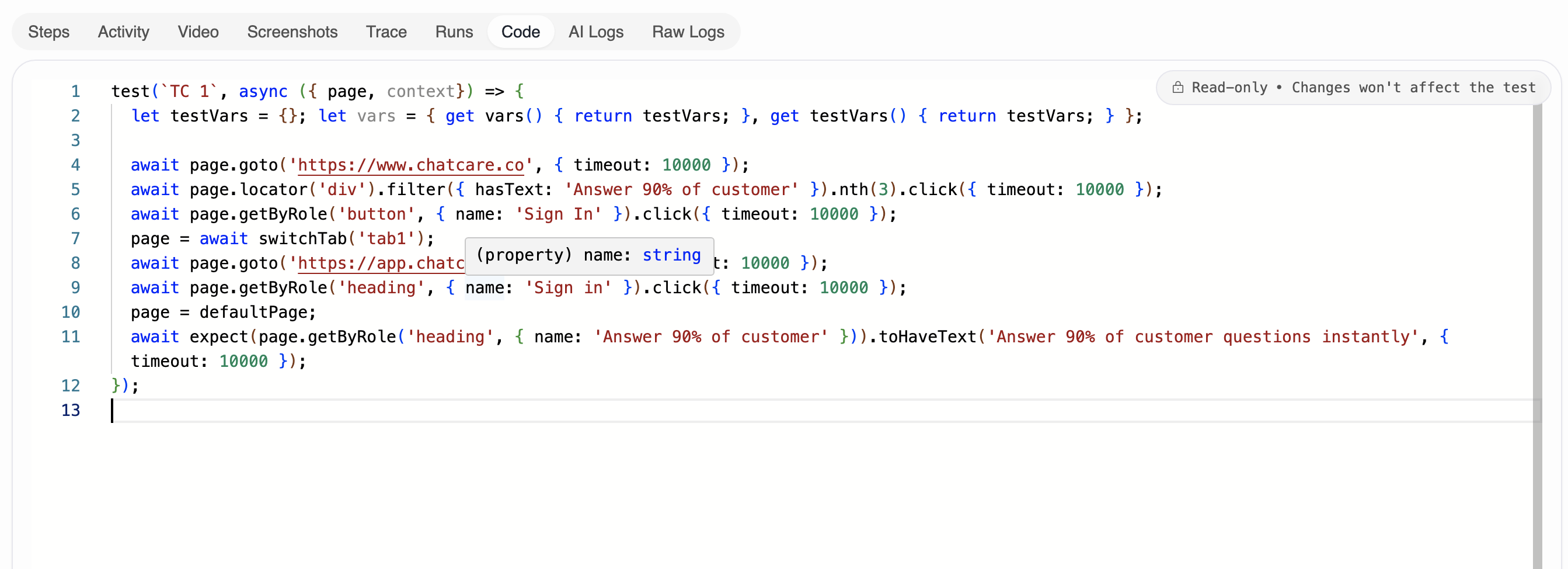
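To make the Insights metrics concrete, the sketch below computes them for a small run history. It is an illustration under stated assumptions: the `Run` class and the sample data are hypothetical, and the flakiness formula (the share of consecutive runs whose result flipped) is one plausible definition chosen for this example, since this page does not state the formula the product uses. Pass rate and average duration follow the descriptions above.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Run:
    passed: bool
    duration_s: float


def pass_rate(runs: List[Run]) -> float:
    """Percentage of runs that passed."""
    return 100 * sum(r.passed for r in runs) / len(runs)


def average_duration(runs: List[Run]) -> Optional[float]:
    """Mean duration of passing runs only; None if nothing passed."""
    passing = [r.duration_s for r in runs if r.passed]
    return sum(passing) / len(passing) if passing else None


def flakiness(runs: List[Run]) -> float:
    """Assumed definition: percentage of consecutive run pairs whose result flipped."""
    if len(runs) < 2:
        return 0.0
    flips = sum(a.passed != b.passed for a, b in zip(runs, runs[1:]))
    return 100 * flips / (len(runs) - 1)


# Hypothetical history, oldest to newest.
history = [
    Run(True, 10.0),
    Run(False, 30.0),
    Run(True, 12.0),
    Run(True, 14.0),
    Run(False, 25.0),
]

print(pass_rate(history))         # 60.0
print(average_duration(history))  # 12.0
print(flakiness(history))         # 75.0
```

Excluding failed runs from the average keeps timeouts and early aborts from skewing the typical execution time.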

Code

- View the generated code for the current steps

Build tests quickly

- Use the Recorder to capture actions

- Use AI Generate to create steps from a goal

- Insert a Run JavaScript step for complex logic and for saving new variables

- Leverage Expressions in step fields to compose dynamic values

Organization best practices

- Use folders for ownership: Group by team or feature area

- Keep names descriptive: Prefer clear names over abbreviations

- Avoid deep nesting: Two to three levels is usually enough

- Prefer moving over duplicating: Reduce drift by avoiding copies

- Schedule by folder: Plans stay up to date as tests are added

Troubleshooting

- Cannot drop here: Ensure you are dropping onto a folder or the Home area

- Item not moving: You may be dropping back into the same folder; try a different target

- Missing actions: Some actions are not shown for system folders (e.g., Home)